
A few years ago, our team spent an entire holiday weekend hunting down a single vulnerable open-source library buried deep in our server infrastructure. We were racing against the clock, manually scanning logs and patching servers to prevent a potential breach. It was a stressful, exhausting nightmare that highlighted a massive flaw in modern tech: we rely on underfunded open-source projects, but lack the resources to secure them effectively.

That exact problem is why the cybersecurity and developer communities are currently buzzing about Anthropic’s latest AI project.

If you are trying to keep up with the latest AI innovations 2026 has to offer, you have likely seen the headlines. But exactly what is Anthropic Project Glasswing?

Simply put, it is an urgent, highly coordinated initiative launched by Anthropic to identify and patch cybersecurity vulnerabilities in critical open-source software before bad actors can exploit them.

Instead of just building another chatbot, Anthropic is deploying its AI models as a proactive defense system. Below, we break down what Project Glasswing actually is, why it exists, and the seven things that matter most for your tech stack right now.

Anthropic Project Glasswing is a 2026 cybersecurity initiative that uses Claude Mythos Preview, an advanced, unreleased AI model, to find and fix critical software vulnerabilities in major operating systems, browsers, and open-source infrastructure. It involves partners including Google, Microsoft, AWS, and Apple, and is backed by $100M in model usage credits.

Here are 7 things to know about Anthropic’s Project Glasswing.

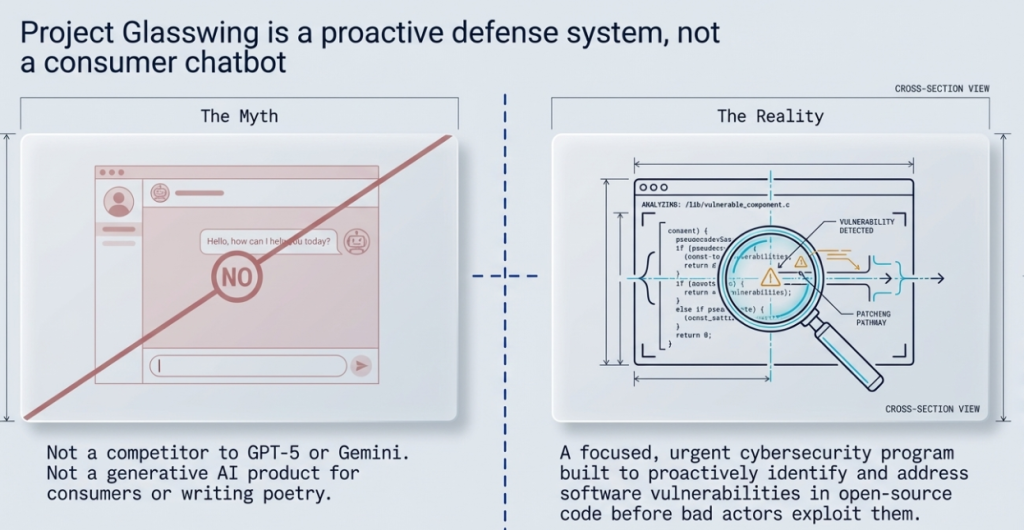

Let’s clear up the most common misconception first.

Project Glasswing isn’t a competitor to GPT-5 or Gemini. It’s not a generative AI product for consumers. It’s a focused cybersecurity program built to identify and address software vulnerabilities in open-source code — the kind of code that quietly powers everything from hospitals to banking systems to government infrastructure.

Anthropic describes it as an urgent response to the growing risk that AI-driven cyberattacks pose to critical software. Rather than waiting for vulnerabilities to be discovered by bad actors, Glasswing aims to find them proactively using AI’s ability to analyze code at a scale and speed no human team can match.

This matters because open-source software is everywhere. When a vulnerability slips through — think Log4Shell in 2021 — the fallout can be catastrophic and global. Project Glasswing is designed to reduce the odds of the next incident like that.

This isn’t a solo effort. One of the most significant details about Project Glasswing is the coalition behind it.

According to reporting from Firstpost and announcements from the Linux Foundation, Anthropic has teamed up with major players including Apple and Google to support this initiative. The Linux Foundation’s involvement is particularly telling — it signals that Project Glasswing is designed with open-source maintainers in mind, not just corporate interests.

For anyone tracking AI research companies and their strategic moves, this collaboration is significant. It positions Anthropic not just as a model builder but as an infrastructure-level player in AI safety — one that major tech companies trust enough to partner with on a sensitive, high-stakes project.

Open-source maintainers are often under-resourced. Many critical packages are maintained by small teams or even individuals. Glasswing gives these maintainers access to advanced AI tools they couldn’t build or afford on their own — tools that can flag dangerous code patterns, suggest fixes, and triage security issues faster.

Here’s where the Glasswing AI capabilities become concrete.

Traditional code auditing relies on a mix of automated scanners (static and dynamic analysis tools, known as SAST and DAST) and manual review by security engineers. Both approaches have gaps. Automated scanners produce false positives. Human reviewers miss things under time pressure, especially in massive codebases.

Project Glasswing applies large language models — the same underlying technology behind Claude — to understand code contextually, not just syntactically. That means it can catch vulnerabilities that depend on how different parts of a program interact, not just obvious bugs in a single function.
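To make the "contextual, not just syntactic" distinction concrete, here is a toy sketch of our own. It is not Anthropic's tooling and says nothing about how Glasswing works internally. The sample code, the function names `line_scan` and `cross_function_scan`, and the detection rules are all assumptions made for illustration. The sample splits a SQL injection risk across two functions: one builds a query with string formatting, the other executes whatever it is given. A per-line pattern check sees nothing wrong, while a check that follows the value across functions flags it.

```python
import ast

# Hypothetical code under review: the risk only appears when both functions are read together.
SAMPLE = '''
def build_query(name):
    # Looks harmless in isolation: it only builds a string.
    return f"SELECT * FROM users WHERE name = '{name}'"

def lookup(cursor, name):
    # Looks harmless in isolation: it only runs a query it was given.
    query = build_query(name)
    cursor.execute(query)
'''

def line_scan(source):
    """Naive per-line check: flag execute() calls that format a string on the same line."""
    return [
        lineno
        for lineno, line in enumerate(source.splitlines(), start=1)
        if "execute(" in line and ('f"' in line or "%" in line or "+" in line)
    ]

def cross_function_scan(source):
    """Flag execute() calls whose argument was produced by a function returning an f-string."""
    tree = ast.parse(source)
    # Step 1: collect functions that return an f-string (ast.JoinedStr).
    builders = {
        fn.name
        for fn in ast.walk(tree)
        if isinstance(fn, ast.FunctionDef)
        for node in ast.walk(fn)
        if isinstance(node, ast.Return) and isinstance(node.value, ast.JoinedStr)
    }
    findings = []
    for fn in ast.walk(tree):
        if not isinstance(fn, ast.FunctionDef):
            continue
        # Step 2: local variables assigned from a call to one of those builders.
        tainted = set()
        for node in ast.walk(fn):
            if (isinstance(node, ast.Assign)
                    and isinstance(node.value, ast.Call)
                    and isinstance(node.value.func, ast.Name)
                    and node.value.func.id in builders):
                tainted.update(t.id for t in node.targets if isinstance(t, ast.Name))
        # Step 3: execute(...) calls that receive one of those variables.
        for node in ast.walk(fn):
            if (isinstance(node, ast.Call)
                    and isinstance(node.func, ast.Attribute)
                    and node.func.attr == "execute"
                    and any(isinstance(a, ast.Name) and a.id in tainted for a in node.args)):
                findings.append((fn.name, node.lineno))
    return findings

if __name__ == "__main__":
    print("line scan findings:", line_scan(SAMPLE))
    print("cross-function findings:", cross_function_scan(SAMPLE))
```

The second check is still a crude, hand-written rule that only covers this one pattern. The appeal of a language model is that it could, in principle, reason about interactions like this without someone writing a rule for each case.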

This is a meaningful step beyond what conventional tools offer. It’s also why Anthropic’s expertise in building AI safety models makes the company well suited to this kind of work. Its researchers have spent years thinking about how AI systems behave under edge conditions, which is exactly the kind of reasoning needed to find subtle security flaws.

| Approach | Strengths | Weaknesses |
|---|---|---|
| Traditional scanners (SAST/DAST) | Fast, scalable | High false-positive rates, misses contextual bugs |
| Manual code review | High precision, contextual | Slow, expensive, doesn’t scale |
| Project Glasswing (AI-assisted) | Contextual understanding at scale | New, unproven at full scale, depends on model accuracy |

No one is claiming Glasswing replaces human security researchers. But as a force multiplier, it has genuine potential.

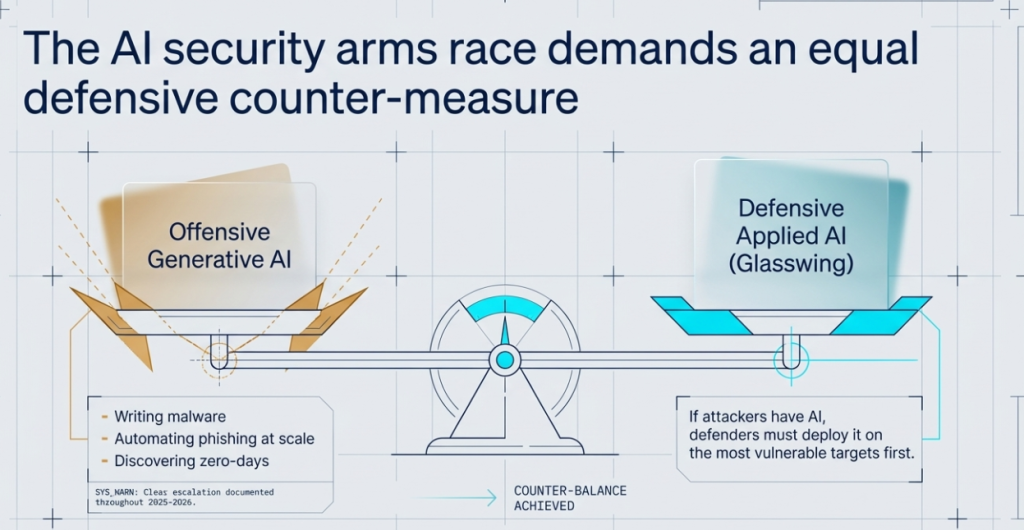

Anthropic didn’t launch this project in a vacuum.

Throughout 2025 and into 2026, security researchers have documented a clear escalation in AI-powered cyberattacks. Attackers are using generative AI to write malware, automate phishing at scale, and discover zero-day vulnerabilities faster than defenders can patch them.

Project Glasswing is framed as a counter-move against the offensive use of AI in cybersecurity. The idea is straightforward — if attackers have AI, defenders need it too, and they need it deployed on the most vulnerable targets first.

This is where the project intersects with broader generative AI trends. The same technology that makes Claude useful for writing and research also makes AI dangerous in the wrong hands. Glasswing is, in a sense, Anthropic’s attempt to ensure the defensive side keeps pace.

Project Glasswing isn’t trying to audit every GitHub repository on earth. Its scope is intentionally narrow: critical open-source software — the packages and libraries that underpin essential infrastructure.

Think of projects like OpenSSL, the Linux kernel, core networking libraries, and widely used dependencies that millions of applications rely on. A vulnerability in one of these packages can cascade across entire industries.

This focus is smart. Trying to scan everything would dilute the effort and likely produce less useful results. By concentrating on high-impact targets, Glasswing can deliver the most value where the risk is greatest.

For security teams and buyers of enterprise AI tools, this also signals something useful: the project’s outputs are most relevant if your organization depends on widely used open-source components (and most do, whether they realize it or not).
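If you are not sure whether your own stack leans on widely used components, a quick inventory is a reasonable first step. The sketch below is ours and has no connection to Glasswing. It lists the packages installed in the current Python environment using the standard library's `importlib.metadata` and checks them against a watchlist. The `WATCHLIST` contents and the helper names are hypothetical and chosen only for illustration.

```python
import re
from importlib.metadata import distributions

# Hypothetical watchlist: package names picked for illustration, not an official list.
WATCHLIST = {"cryptography", "urllib3", "requests"}

def normalize(name):
    """Normalize a package name (lowercase, runs of '-', '_', '.' become '-')."""
    return re.sub(r"[-_.]+", "-", name).lower()

def installed_packages():
    """Map normalized package name -> version for the current Python environment."""
    return {
        normalize(dist.metadata["Name"]): dist.version
        for dist in distributions()
        if dist.metadata["Name"]  # skip distributions with broken metadata
    }

def find_watched(installed, watchlist):
    """Return sorted (name, version) pairs for installed packages on the watchlist."""
    watched = {normalize(name) for name in watchlist}
    return sorted(
        (name, version) for name, version in installed.items() if name in watched
    )

if __name__ == "__main__":
    for name, version in find_watched(installed_packages(), WATCHLIST):
        print(f"{name}=={version}")
```

This only covers one Python environment. A real inventory would also need to account for system libraries, containers, and dependencies in other languages.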

Whenever a large AI company enters the open-source space, there’s reasonable skepticism. Open-source communities have been burned before by corporations that extract value without giving back.

A thread on Reddit’s r/cybersecurity captures this dynamic well. Some commenters welcomed the initiative, pointing out the dire need for better security tooling for underfunded projects. Others raised questions about data handling — specifically, whether Glasswing’s AI models would be trained on proprietary or private code, and what that means for open-source licensing.

Anthropic’s official page and LinkedIn announcement position the project as collaborative and open, with an emphasis on giving tools to maintainers rather than replacing them. But the proof will be in the execution — particularly around transparency, data governance, and how much maintainers actually benefit in practice.

These are fair questions. And how Anthropic answers them over the coming months will determine whether Glasswing earns lasting trust in the open-source ecosystem.

This is perhaps the most important takeaway for anyone following the future of AI models and the competitive landscape.

While the Anthropic vs. OpenAI narrative usually centers on chatbot performance and benchmark scores, Glasswing reveals a different strategic direction. Anthropic is investing in applied AI safety at the infrastructure level — using its technology to address real-world risks, not just build consumer products.

That distinction matters. OpenAI has moved aggressively into consumer and enterprise products. Google is integrating Gemini across its ecosystem. Anthropic, with Glasswing, is carving out a niche as the company that takes AI’s second-order risks seriously — and builds tools to mitigate them.

This doesn’t mean Anthropic is abandoning Claude or stepping back from the race to ship better models. Far from it. But Glasswing shows that its roadmap includes more than just bigger models. It includes using those models to solve problems that most AI companies aren’t even attempting.

For people comparing upcoming AI technologies and deciding which companies to watch, that’s a signal worth noting.

As of now, Anthropic hasn’t published a formal public release date for all of Glasswing’s tools. The project is live and actively being deployed with partner organizations and select open-source maintainers, but a full, broadly accessible rollout hasn’t been scheduled publicly.

If you’re searching for the Anthropic Project Glasswing release date, the honest answer is: it’s already operational in a limited capacity, and broader availability will likely depend on how the initial deployments perform and what feedback Anthropic receives from maintainers and partners.

Keep an eye on Anthropic’s official channels and the Linux Foundation for updates.

Project Glasswing isn’t something you’ll download next week. It’s not a Claude competitor you can test against GPT-5.

It’s Anthropic’s most practical move yet: using its best AI not to write poems, but to quietly patch the digital world. For developers, security teams, and anyone

How do you think AI initiatives like Project Glasswing will impact your team’s workflow? Drop your thoughts in the comments below, and let’s keep the conversation going here at TechGuruShiksha.